XCam

Mixed-Initiative Virtual Cinematography for Live Production of Virtual Reality Experiences

Published at the ACM CHI Conference on Human Factors in Computing Systems (CHI 2025)

Introduction

As VR technologies evolve, they are increasingly used to expand access to events such as conferences, social gatherings, and immersive experiences that were once limited to in-person attendance. However, capturing and streaming these virtual moments remains challenging: current approaches often rely on complex setups or are restricted to a single viewpoint, which limits their expressive potential. Meanwhile, user-created videos on platforms like TikTok thrive on creative use of simple tools such as smartphone gimbals and drones. In professional filmmaking, AR/VR-based virtual production, seen in shows like The Mandalorian, is replacing traditional greenscreens with real-time immersive environments. These developments highlight a growing need for more dynamic and cinematic VR capture solutions. In this study, we explore how virtual cameras can enhance VR capture and live production. Drawing on trends in social media, performance art, and filmmaking, we develop intuitive tools that support creative control, balancing AI automation with manual input to make virtual cinematography accessible and expressive.

Research Questions & Process

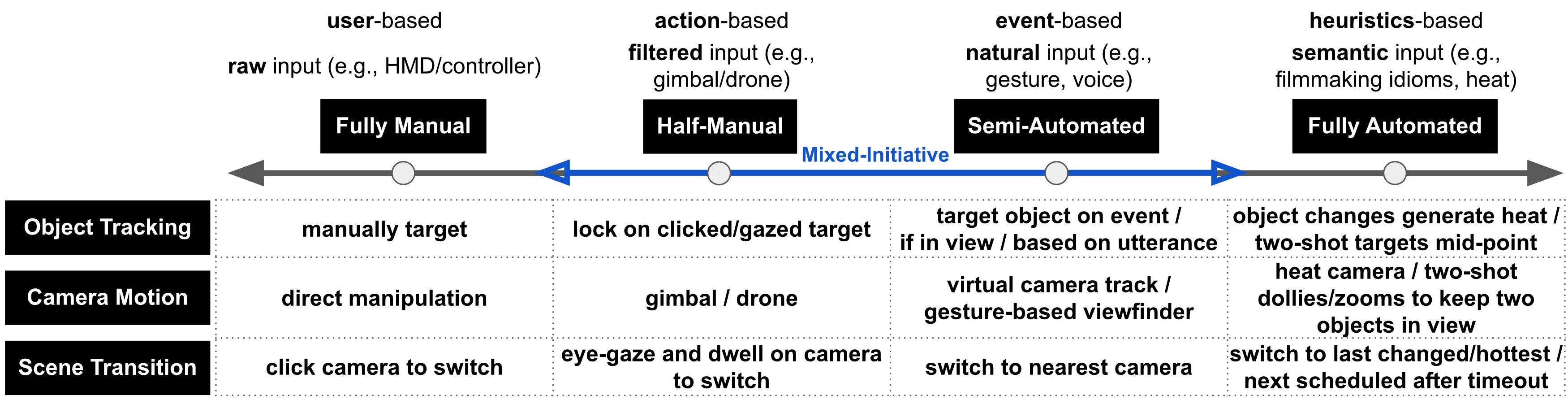

- RQ1 - Camera Control: How can the spectrum of virtual camera automation be constructed?

- RQ2 - Tradeoffs: What are the benefits and limitations of increasing the automation of different tasks in virtual cinematography?

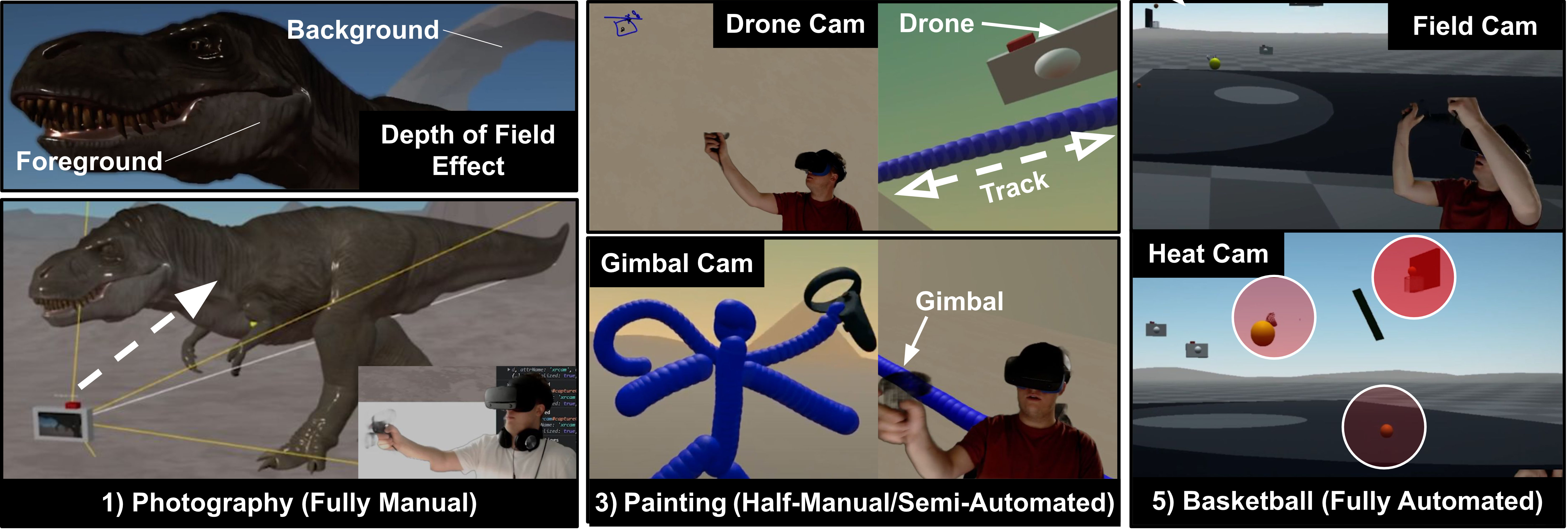

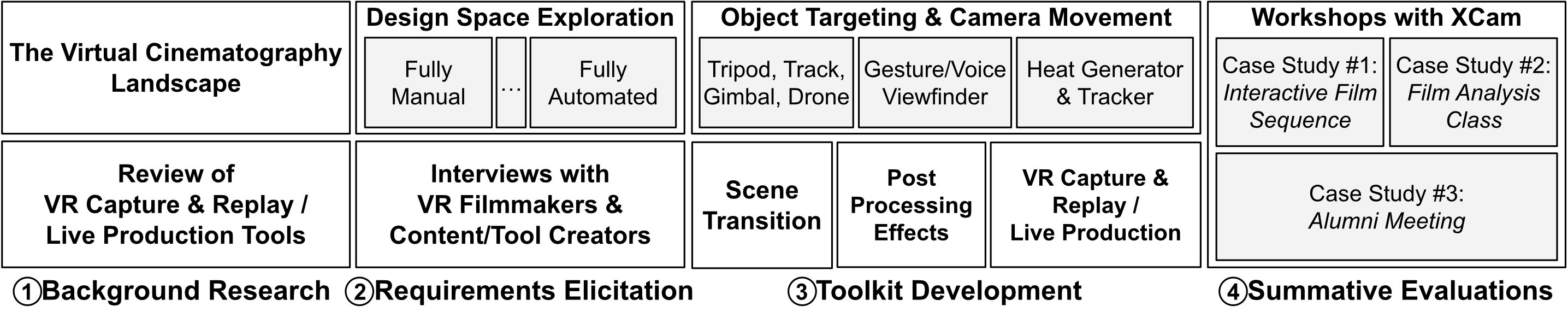

This study was conducted in four major steps. First, we studied the landscape of virtual cinematography across research and industry practice, and surveyed VR tools that support capture-and-replay or live production. Second, to elicit requirements for XCam and explore the design space of mixed-initiative control, we developed six example applications and interviewed six experts in filmmaking and VR tool/content creation. Third, informed by these studies, we developed the XCam toolkit, which separates object targeting, camera movement, and scene transition. Finally, we conducted individual follow-up workshops with three of the experts, developing three case studies using XCam.

Research Contribution

- A design space spanning manual to automated virtual cinematography in VR streaming;

- A toolkit, XCam, that decouples object tracking, camera motion, and scene transition for flexible automation and control;

- Empirical insights on the benefits and limitations of increasing automation in virtual cinematography.
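
To make the idea of decoupling more concrete, the following is a minimal sketch and not XCam's actual API. It assumes that each of the three concerns (targeting, movement, transition) can be modeled as an independent channel with its own automated policy and an optional manual override. The names `Channel`, `plan_shot`, the three policy functions, and the layout of the `scene` dictionary are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class Channel:
    """One independently automated concern (hypothetical abstraction)."""
    auto: Callable[[dict], object]   # automated policy: scene state -> value
    manual: Optional[object] = None  # operator override; None defers to automation

    def resolve(self, scene: dict):
        # Manual input wins whenever the operator has supplied one.
        return self.manual if self.manual is not None else self.auto(scene)


def follow_speaker(scene: dict) -> str:
    # Assumed targeting policy: track whoever is currently speaking.
    return scene["speaking"]


def fixed_offset(scene: dict) -> Vec3:
    # Assumed movement policy: hold the camera above and behind the target.
    return (0.0, 1.5, -3.0)


def cut_on_speaker_change(scene: dict) -> str:
    # Assumed transition policy: hard cut when the active speaker changes.
    return "cut" if scene["speaking"] != scene["previous_target"] else "hold"


def plan_shot(scene: dict, targeting: Channel, movement: Channel,
              transition: Channel) -> dict:
    """Resolve each channel separately, then combine the results into one shot."""
    target = targeting.resolve(scene)
    dx, dy, dz = movement.resolve(scene)
    tx, ty, tz = scene["positions"][target]
    return {
        "target": target,
        "camera": (tx + dx, ty + dy, tz + dz),
        "transition": transition.resolve(scene),
    }


scene = {
    "speaking": "alice",
    "previous_target": "bob",
    "positions": {"alice": (1.0, 0.0, 2.0), "bob": (0.0, 0.0, 0.0)},
}

# Fully automated: every channel falls back to its policy.
auto_shot = plan_shot(scene, Channel(follow_speaker), Channel(fixed_offset),
                      Channel(cut_on_speaker_change))

# Mixed initiative: the operator pins the target, the rest stays automated.
mixed_shot = plan_shot(scene, Channel(follow_speaker, manual="bob"),
                       Channel(fixed_offset), Channel(cut_on_speaker_change))
```

Because each channel resolves on its own, an operator in this sketch can take over one concern (here, the target) while leaving the other two automated, which is one way to read "flexible automation and control".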